Project 5

Robot Arm

Use Quanser's QArm Mini and cloud AI tooling to rebuild the tower and perform reliable handoffs between adjacent projects.

Overview

The Robot Arm project uses Quanser’s QArm Mini platform, with each of the six teams receiving exclusive access to one arm for the duration of the hackathon. Google Cloud is the expected platform for the AI side of this project, and teams will have access to event-day credits to support that work.

The aim of the project is to show how modern agentic AI can be combined with a robotic arm to carry out repeatable, real-world manipulation tasks. For this project, that aim is distilled into two core objectives: manipulating an object and stacking blocks.

Within the wider hackathon cycle, the robot arm will act as a key stage, rebuilding the block tower after the catapult stage knocks it down. It will also act as the intermediary link between adjacent projects, transferring the angry bird through the intended connection sequence: Project 3 (Platformer) -> Project 2 (Catapult) -> Project 5 (Robot Arm) -> Project 4 (Swarm) -> Project 1 (Rover) -> Project 6 (Creative) -> back to Project 3 (Platformer).

Objectives

Teams must build a system that achieves two core objectives.

1. Rebuild the Tower

The primary objective is to reconstruct a tall, stable block tower after it has been knocked down by the catapult stage. The system should reset this part of the course in a consistent and repeatable way so that the wider hackathon cycle can continue without manual intervention.
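One way to keep the rebuild repeatable is to derive every placement pose from a single measured tower base point, so each run stacks to exactly the same coordinates. This is a minimal sketch assuming a single-column tower of identical blocks, grasped at mid-height; the block height, base position, and approach clearance are hypothetical placeholders to measure on the day, not values from the event kit.

```python
from dataclasses import dataclass

# Hypothetical values: measure the real blocks and mark the real tower base on the day.
BLOCK_HEIGHT_MM = 25.0
TOWER_BASE_XY_MM = (250.0, 0.0)  # tower centre in the arm's base frame
TABLE_Z_MM = 0.0                 # table surface height in the arm's base frame
APPROACH_CLEARANCE_MM = 40.0     # hover height above each placement point


@dataclass(frozen=True)
class PlacePose:
    level: int
    approach: tuple[float, float, float]  # hover point directly above the drop
    place: tuple[float, float, float]     # gripper target when the block is seated


def tower_place_poses(levels: int) -> list[PlacePose]:
    """Return one approach/place pose pair per tower level, bottom to top."""
    x, y = TOWER_BASE_XY_MM
    poses = []
    for level in range(levels):
        # Assumes the gripper holds each block at its mid-height.
        z_place = TABLE_Z_MM + level * BLOCK_HEIGHT_MM + BLOCK_HEIGHT_MM / 2
        poses.append(PlacePose(level, (x, y, z_place + APPROACH_CLEARANCE_MM), (x, y, z_place)))
    return poses
```

Descending vertically from the approach point to the place point reduces the chance of the gripper clipping lower levels; if the tower footprint moves, only `TOWER_BASE_XY_MM` needs re-measuring.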

2. Link the Projects Together (Inter-Project Handoff)

The second objective is to use the robot arm as a reliable handoff point between adjacent systems. Teams should design a workflow that can receive the angry bird from one stage and place it accurately for the next. This objective should demonstrate both accurate object handling and effective coordination with the surrounding projects.

The intended inter-project connection order is:

Project 3 (Platformer) -> Project 2 (Catapult) -> Project 5 (Robot Arm) -> Project 4 (Swarm) -> Project 1 (Rover) -> Project 6 (Creative) -> back to Project 3 (Platformer)

For the Robot Arm page specifically, that means the handoff path is:

- receive from Project 2 (Catapult)

- place for Project 4 (Swarm)
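A handoff tends to be more repeatable when it is an explicit sequence that refuses to advance until each step is confirmed. The sketch below assumes hypothetical `bird_present`, `pick`, `grip_confirmed`, and `place` callbacks that a team would implement against its own camera, arm control, and fixtures; none of them are QArm Mini library functions.

```python
import time
from enum import Enum, auto
from typing import Callable


class HandoffState(Enum):
    WAIT_FOR_BIRD = auto()  # waiting for Project 2 (Catapult) to deliver the bird
    PICK = auto()
    VERIFY_GRIP = auto()
    PLACE = auto()          # placing the bird where Project 4 (Swarm) expects it
    DONE = auto()
    FAILED = auto()


def run_handoff(
    bird_present: Callable[[], bool],    # hypothetical: e.g. camera check of the receive fixture
    pick: Callable[[], None],            # hypothetical: move to fixture, close gripper, lift
    grip_confirmed: Callable[[], bool],  # hypothetical: e.g. gripper current or camera check
    place: Callable[[], None],           # hypothetical: move to the Swarm-side mount and release
    max_polls: int = 100,
    poll_interval_s: float = 0.1,
    max_pick_attempts: int = 2,
) -> HandoffState:
    """Step through the handoff, refusing to advance until each step is confirmed."""
    state = HandoffState.WAIT_FOR_BIRD
    polls = attempts = 0
    while state not in (HandoffState.DONE, HandoffState.FAILED):
        if state is HandoffState.WAIT_FOR_BIRD:
            polls += 1
            if bird_present():
                state = HandoffState.PICK
            elif polls >= max_polls:
                state = HandoffState.FAILED  # give up rather than wait forever
            else:
                time.sleep(poll_interval_s)
        elif state is HandoffState.PICK:
            attempts += 1
            pick()
            state = HandoffState.VERIFY_GRIP
        elif state is HandoffState.VERIFY_GRIP:
            if grip_confirmed():
                state = HandoffState.PLACE
            elif attempts < max_pick_attempts:
                state = HandoffState.PICK  # retry, assuming the bird is still at the fixture
            else:
                state = HandoffState.FAILED
        elif state is HandoffState.PLACE:
            place()
            state = HandoffState.DONE
    return state
```

Returning `FAILED` after a bounded number of polls and retries gives the team a clear signal to log, instead of a sequence that hangs and invites manual intervention.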

Quickstart

- Get the arm calibrated and the workspace bounded before you add cloud logic or complex task sequencing.

- Prove one repeatable pickup-and-place cycle with a single object before you attempt tower rebuilding or full handoffs.

- Decide which neighboring project interfaces you need to support in the fixed connection order: receive from Project 2 (Catapult) and place for Project 4 (Swarm).

- Collect Google Cloud credits from a project supervisor on the day by scanning the provided QR code with the Google account you want to use.
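Bounding the workspace can start as a single check that rejects any Cartesian target outside a box and a reduced reach radius before it is sent to the arm. This is a sketch: the 382 mm reach is the approximate figure from Quanser's product material, while the box limits, the safety margin, and the choice to treat reach as horizontal distance from the base axis are all hypothetical assumptions to replace with your own measurements.

```python
import math

REACH_MM = 382.0         # approximate QArm Mini reach quoted in Quanser's product material
SAFETY_MARGIN_MM = 30.0  # hypothetical margin so targets stay well inside the reach limit

# Hypothetical axis-aligned limits in the arm's base frame (mm); measure your own table.
X_LIMITS = (80.0, 340.0)
Y_LIMITS = (-250.0, 250.0)
Z_LIMITS = (0.0, 300.0)


def target_is_safe(x: float, y: float, z: float) -> bool:
    """Return True only if a gripper target lies inside the box and the reduced reach radius."""
    in_box = (
        X_LIMITS[0] <= x <= X_LIMITS[1]
        and Y_LIMITS[0] <= y <= Y_LIMITS[1]
        and Z_LIMITS[0] <= z <= Z_LIMITS[1]
    )
    # Assumption: reach is measured as horizontal distance from the base yaw axis.
    within_reach = math.hypot(x, y) <= REACH_MM - SAFETY_MARGIN_MM
    return in_box and within_reach
```

Calling this before every motion command, and stopping the sequence on `False` rather than silently clamping the target, keeps bad perception output from driving the arm into neighbouring hardware.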

Google Cloud Workflow

Teams are expected to use Google Cloud for the AI part of this project. In practice that usually means Gemini and Vertex AI for perception or decision-making, plus other Google Cloud services if you need remote compute, hosting, or hardware coordination.
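Whichever model is used, its output is safer treated as untrusted until it has been parsed and range-checked. This sketch assumes the team has prompted Gemini to reply with JSON of the hypothetical form `{"blocks": [{"x_mm": ..., "y_mm": ...}]}`; the schema, field names, block limit, and workspace ranges are illustrative, and the Gemini API call itself is omitted.

```python
import json

MAX_BLOCKS = 20  # hypothetical sanity limit on how many blocks a reply may list


def parse_block_positions(
    reply_text: str,
    x_range: tuple[float, float] = (80.0, 340.0),   # hypothetical workspace limits (mm)
    y_range: tuple[float, float] = (-250.0, 250.0),
) -> list[tuple[float, float]]:
    """Parse and validate a model reply; raise ValueError instead of guessing."""
    try:
        data = json.loads(reply_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    blocks = data.get("blocks") if isinstance(data, dict) else None
    if not isinstance(blocks, list) or not 0 < len(blocks) <= MAX_BLOCKS:
        raise ValueError("expected a non-empty 'blocks' list")
    positions = []
    for i, block in enumerate(blocks):
        try:
            x, y = float(block["x_mm"]), float(block["y_mm"])
        except (KeyError, TypeError, ValueError) as exc:
            raise ValueError(f"block {i} is missing numeric x_mm/y_mm") from exc
        if not (x_range[0] <= x <= x_range[1] and y_range[0] <= y <= y_range[1]):
            raise ValueError(f"block {i} at ({x}, {y}) is outside the workspace")
        positions.append((x, y))
    return positions
```

Raising on any malformed reply lets the controller re-query the model or fall back to a fixed routine, rather than commanding the arm from a half-parsed answer.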

- The event has 100 Google Cloud credits available in total.

- One credit is usually claimed per person, and an average team will often use 1 to 4 credits in total if several members scan separately.

- Each credit is worth $5 of Google Cloud usage.

- Credits can be used for Gemini and other Google AI services, but they can also be spent on CPUs, GPUs, Cloud Run, and other remote processing if your robot pipeline needs it.

- If one account runs out, multiple credits can be applied to the same Google account.

- You do not type in a manual code: scan the QR code and the credit is applied automatically to your Google account.
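Put together, the figures above imply a modest cloud budget, which is worth keeping in mind before reaching for GPUs or long-running virtual machines:

```latex
\begin{aligned}
\text{event total} &= 100 \times \$5 = \$500 \\
\text{typical team} &= (1 \text{ to } 4) \times \$5 = \$5 \text{ to } \$20
\end{aligned}
```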

Materials Provided

- Quanser’s QArm Mini platform

- Google Cloud credits will be distributed by project supervisors on the day via QR code.

- Vertex AI and Gemini: Run high-performance multimodal models to give your robot the ability to see, hear, and reason.

- Vision API: Identify objects, detect faces, and read text from your robot’s camera feed in real time.

- Cloud Run: Deploy your web applications or bot controllers instantly in a fully managed environment.

- BigQuery: Store and analyse vast amounts of sensor data to find patterns or train your own custom models.

- Cloud Functions: Create event-driven triggers that let your hardware respond to cloud-based signals automatically.

- Compute Engine: Access high-power virtual machines and GPUs for intensive training or simulation tasks.

- Access to the Makerspace for building the inter-project handoff systems, including 3D printers, laser cutters, and other materials.

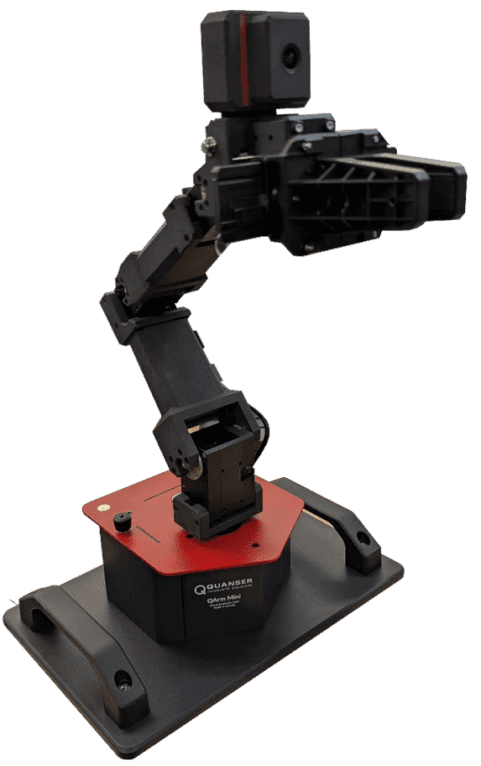

Quanser QArm Mini Notes

The official Quanser product material positions the QArm Mini as a compact teaching manipulator for introductory robotics, so teams should aim for controlled tabletop pick-and-place and repeatable handoff behaviours rather than large, high-payload motions.

Platform Snapshot

- 4 DOF arm with base yaw, shoulder/elbow/wrist pitch, and a parallel gripper

- Triple-parallel pitch arrangement that helps maintain picked-object orientation through sagittal-plane motion

- Approximate arm reach of 382 mm, gripper grasp of 70 mm, and maximum payload of 150 g

- Joint sensing for position, speed, and current monitoring, which can also support payload-estimation workflows

- Post-wrist RGB camera with two swivel configurations at up to 1280 x 720 resolution and 60 Hz

- Plug-and-play USB connection for desktop use, with support across Python, MATLAB, Simulink, and ROS 2
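Because the shoulder, elbow, and wrist pitch axes are parallel, the arm reduces to a base yaw plus a planar three-link chain, which keeps forward kinematics short. This sketch assumes the shoulder axis sits on the yaw axis with no horizontal offset, uses a hypothetical angle convention, and uses hypothetical link lengths and base height; none of these are Quanser's published dimensions, so take real values and sign conventions from the QArm Mini documentation.

```python
import math

# Hypothetical geometry (mm): placeholders only, replace with documented QArm Mini values.
BASE_HEIGHT = 100.0  # height of the shoulder pitch axis above the base frame origin
L_UPPER = 150.0      # shoulder axis -> elbow axis
L_FORE = 150.0       # elbow axis -> wrist axis
L_TOOL = 80.0        # wrist axis -> gripper centre


def forward_kinematics(
    yaw: float, shoulder: float, elbow: float, wrist: float
) -> tuple[float, float, float, float]:
    """Map joint angles (rad) to gripper centre (x, y, z) in mm plus absolute tool pitch (rad).

    Assumed convention: each pitch angle is relative to the previous link,
    0 means straight/horizontal and positive means up.
    """
    # Absolute pitch of each link in the vertical plane selected by the base yaw.
    a1 = shoulder
    a2 = a1 + elbow
    a3 = a2 + wrist
    # Horizontal distance from the yaw axis and height of the gripper centre.
    r = L_UPPER * math.cos(a1) + L_FORE * math.cos(a2) + L_TOOL * math.cos(a3)
    z = BASE_HEIGHT + L_UPPER * math.sin(a1) + L_FORE * math.sin(a2) + L_TOOL * math.sin(a3)
    # Rotate the planar result about the vertical axis by the base yaw.
    return r * math.cos(yaw), r * math.sin(yaw), z, a3
```

With all joints at zero the sketch describes a fully stretched, horizontal arm, so the gripper sits at the sum of the hypothetical link lengths (380 mm) at shoulder height. The returned tool pitch `a3` is the sum of the three pitch joints, which is what makes it easy to hold a block level while moving.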

Useful Courseware Ideas

- Forward, inverse, and differential kinematics are directly relevant for repeatable tower rebuilding and handoff positioning.

- Visual servoing and object detection are a strong fit for block pickup, target alignment, and bird transfer.

- Current-based payload sensing is useful if teams want to detect whether a block or bird has actually been gripped.

- Path and collision monitoring matter here because the arm needs to work near surrounding project hardware and custom fixtures.

- Quanser’s robotics guide presents the platform as part of a broader open-architecture workflow, so a good event-day approach is to lock down calibration and workspace limits first, then layer camera logic and task sequencing on top.
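For tower rebuilding and handoffs the inverse problem usually matters more: given a Cartesian target and a desired gripper pitch (for example pointing straight down onto a block), find the joint angles. The sketch below is the standard closed-form solution for a yaw-plus-planar-3R arm, using the law of cosines for the upper-arm and forearm pair. The link lengths, base height, zero-offset shoulder, and angle convention (pitch measured from horizontal, positive up, each joint relative to the previous link) are hypothetical and must be replaced with the real QArm Mini values.

```python
import math
from typing import Optional

# Hypothetical geometry (mm): placeholders only, replace with documented QArm Mini values.
BASE_HEIGHT = 100.0
L_UPPER = 150.0
L_FORE = 150.0
L_TOOL = 80.0


def inverse_kinematics(
    x: float, y: float, z: float, tool_pitch: float, elbow_up: bool = True
) -> Optional[tuple[float, float, float, float]]:
    """Return (yaw, shoulder, elbow, wrist) in rad, or None if the target is unreachable.

    tool_pitch = -pi/2 means the gripper points straight down.
    """
    yaw = math.atan2(y, x)
    r = math.hypot(x, y)
    # Step back from the gripper centre to the wrist axis along the tool direction.
    wr = r - L_TOOL * math.cos(tool_pitch)
    wz = z - BASE_HEIGHT - L_TOOL * math.sin(tool_pitch)
    # Law of cosines for the elbow angle of the two-link (upper arm + forearm) chain.
    c2 = (wr**2 + wz**2 - L_UPPER**2 - L_FORE**2) / (2 * L_UPPER * L_FORE)
    if abs(c2) > 1.0 + 1e-9:
        return None
    c2 = max(-1.0, min(1.0, c2))  # absorb floating-point rounding at the workspace edge
    s2 = math.sqrt(1.0 - c2**2)
    # Negative elbow angle keeps the elbow above the shoulder-wrist line.
    elbow = -math.atan2(s2, c2) if elbow_up else math.atan2(s2, c2)
    shoulder = math.atan2(wz, wr) - math.atan2(
        L_FORE * math.sin(elbow), L_UPPER + L_FORE * math.cos(elbow)
    )
    # The wrist makes up whatever pitch remains to reach the requested tool pitch.
    wrist = tool_pitch - shoulder - elbow
    return yaw, shoulder, elbow, wrist
```

A `None` result is a useful early warning that a tower level or handoff pose is out of reach, and it pairs naturally with joint-limit checks before any command is sent.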

Common Failure Points

- trying to solve cloud integration before the arm is calibrated and repeatable

- leaving handoff geometry and fixtures undefined until late in the event

- assuming the gripper can tolerate an object or fixture without testing it

- treating adjacent project interfaces as ad hoc instead of designing around the fixed connection order

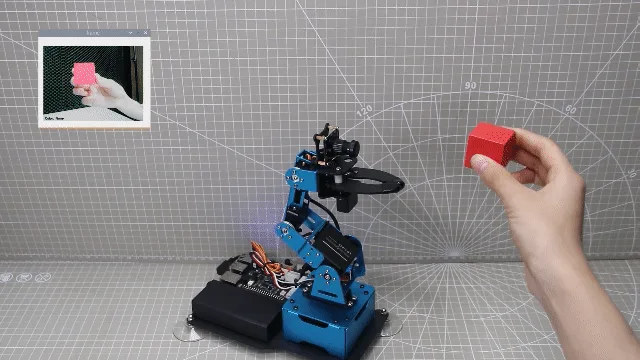

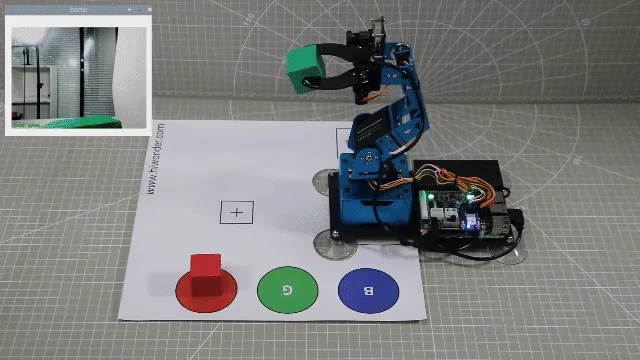

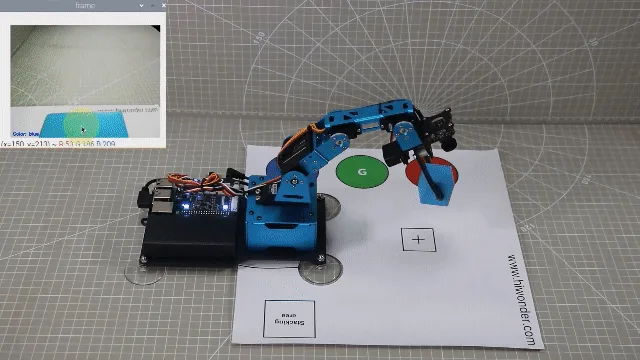

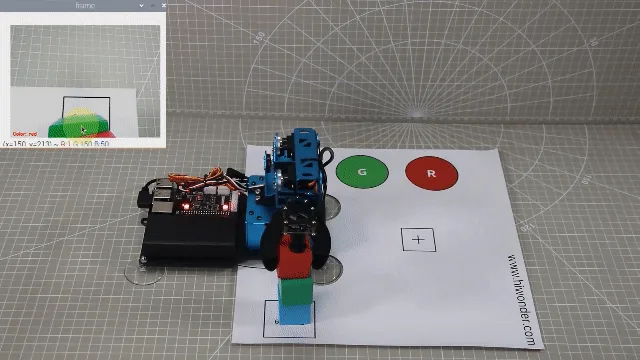

Reference Gallery

Block Handling Examples

These external reference stills show the kind of vision-guided pickup, transfer, and stacking workflow this project is aiming for. Treat them as task reference only, not as a required hardware layout or fixture design.

Tower Scoring Criteria (60 points)

Tower Height (30 points)

Tower Height will be scored comparatively across all teams. Points will be awarded based on the highest valid tower achieved across three attempts. Height will be measured by the number of completed stacked levels.

For a tower to be considered valid, it must remain standing unsupported for at least 5 seconds after the build is complete.

Each team’s best valid attempt will be used for scoring. Teams will then be ranked from tallest valid tower to shortest valid tower, and points will be awarded as follows:

| Place | Points |
|---|---|
| 1st place | 30 |
| 2nd place | 25 |
| 3rd place | 20 |
| 4th place | 15 |
| 5th place | 10 |
| 6th place | 5 |

If a team does not achieve a valid tower, they will receive 0 points for this section. If two teams achieve the same tower height, the team with the faster valid completion time will place higher.
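The ranking rule above (best valid height across three attempts, ties broken by faster completion time, fixed points by place) can be written out as a small function for checking how a result would be placed. The attempt record format is hypothetical, and when a team has several valid attempts at its best height, this sketch assumes the fastest of those is the one used for tie-breaking.

```python
from typing import Optional

PLACE_POINTS = [30, 25, 20, 15, 10, 5]

# Hypothetical record format: (levels, completion_time_s, valid).
Attempt = tuple[int, float, bool]


def best_valid(attempts: list[Attempt]) -> Optional[tuple[int, float]]:
    """Tallest valid attempt; among equal heights, the fastest one."""
    valid = [(levels, t) for levels, t, ok in attempts if ok]
    if not valid:
        return None
    return max(valid, key=lambda a: (a[0], -a[1]))


def tower_height_points(teams: dict[str, list[Attempt]]) -> dict[str, int]:
    best = {team: best_valid(attempts) for team, attempts in teams.items()}
    # Rank by height (descending), then completion time (ascending).
    ranked = sorted(
        (team for team in best if best[team] is not None),
        key=lambda team: (-best[team][0], best[team][1]),
    )
    points = {team: 0 for team in teams}  # no valid tower -> 0 points
    for place, team in enumerate(ranked[: len(PLACE_POINTS)]):
        points[team] = PLACE_POINTS[place]
    return points
```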

Repeatability (30 points)

Repeatability measures how consistently a team can rebuild a valid tower across repeated judged attempts. This category will be scored using the same three official runs used for the Tower Height section.

A run will be considered successful if the robot rebuilds a valid tower that remains standing unsupported for at least 5 seconds, with no manual intervention during the attempt.

Points will be awarded as follows:

| Outcome | Points |
|---|---|
| 3 successful runs | 30 |
| 2 successful runs | 20 |
| 1 successful run | 10 |
| 0 successful runs | 0 |

This score is independent of Tower Height. Teams do not need to match their tallest tower on every run; each run only needs to result in a valid tower.
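Since each successful run is worth 10 points, the repeatability score is 10 times the number of successful runs out of the three official runs. A sketch of that rule, with a hypothetical per-run record of whether the tower was valid and whether anyone intervened:

```python
def repeatability_points(runs: list[tuple[bool, bool]]) -> int:
    """runs: hypothetical (valid_tower, manual_intervention) pairs for the three official runs."""
    if len(runs) != 3:
        raise ValueError("scoring uses exactly three official runs")
    # A run counts only if the tower is valid and nobody touched the system during the attempt.
    successes = sum(1 for valid, intervened in runs if valid and not intervened)
    return 10 * successes
```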

Inter-Project Handoff Scoring Criteria (40 points)

Successful Handoff Scenarios (20 points)

Teams may earn up to 20 points for successfully implementing inter-project handoffs.

| Scenario | Points |
|---|---|
| First successful handoff scenario | 10 |
| Second successful handoff scenario | 10 |
| Maximum total | 20 |

A handoff scenario will be considered successful if the robot arm receives the angry bird from Project 2 (Catapult) and places it for Project 4 (Swarm) as part of the intended connection order listed above, with no manual intervention during the attempt. Teams are encouraged to design a 3D-printed mount that is easy to attach to the adjacent projects if needed.

Repeatability (10 points)

Teams will be awarded points based on how reliably their chosen handoff scenarios can be repeated.

| Outcome | Points |
|---|---|
| Handoff system works reliably across repeated attempts | 10 |
| Handoff system works inconsistently, with partial repeatability | 5 |
| Handoff system cannot be repeated reliably | 0 |

Judges will assess repeatability based on whether the demonstrated handoff process can be reproduced with similar results across multiple runs.
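The numeric parts of the handoff section combine as 10 points per successful scenario, capped at 20, plus a repeatability rating worth 10, 5, or 0. A sketch of that arithmetic, where the rating labels are hypothetical names for the three outcomes in the table; the rating itself and the separate 10 judged points are not computed here.

```python
# Hypothetical labels for the three repeatability outcomes in the table.
REPEATABILITY_POINTS = {"reliable": 10, "inconsistent": 5, "not_repeatable": 0}


def handoff_points(successful_scenarios: int, repeatability: str) -> int:
    if successful_scenarios < 0:
        raise ValueError("scenario count cannot be negative")
    scenario_points = min(20, 10 * successful_scenarios)  # 10 per scenario, capped at 20
    return scenario_points + REPEATABILITY_POINTS[repeatability]
```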

Judge Assessment: Approach and Integration (10 points)

The remaining 10 points will be awarded qualitatively by the judges based on the quality of the team’s overall approach.

Judges will consider:

- how well the robot arm integrates with the surrounding projects

- the quality and robustness of the handoff strategy

- how clearly the system has been designed for use within the wider hackathon cycle

- the effectiveness of the team’s technical approach to coordination, positioning, and transfer